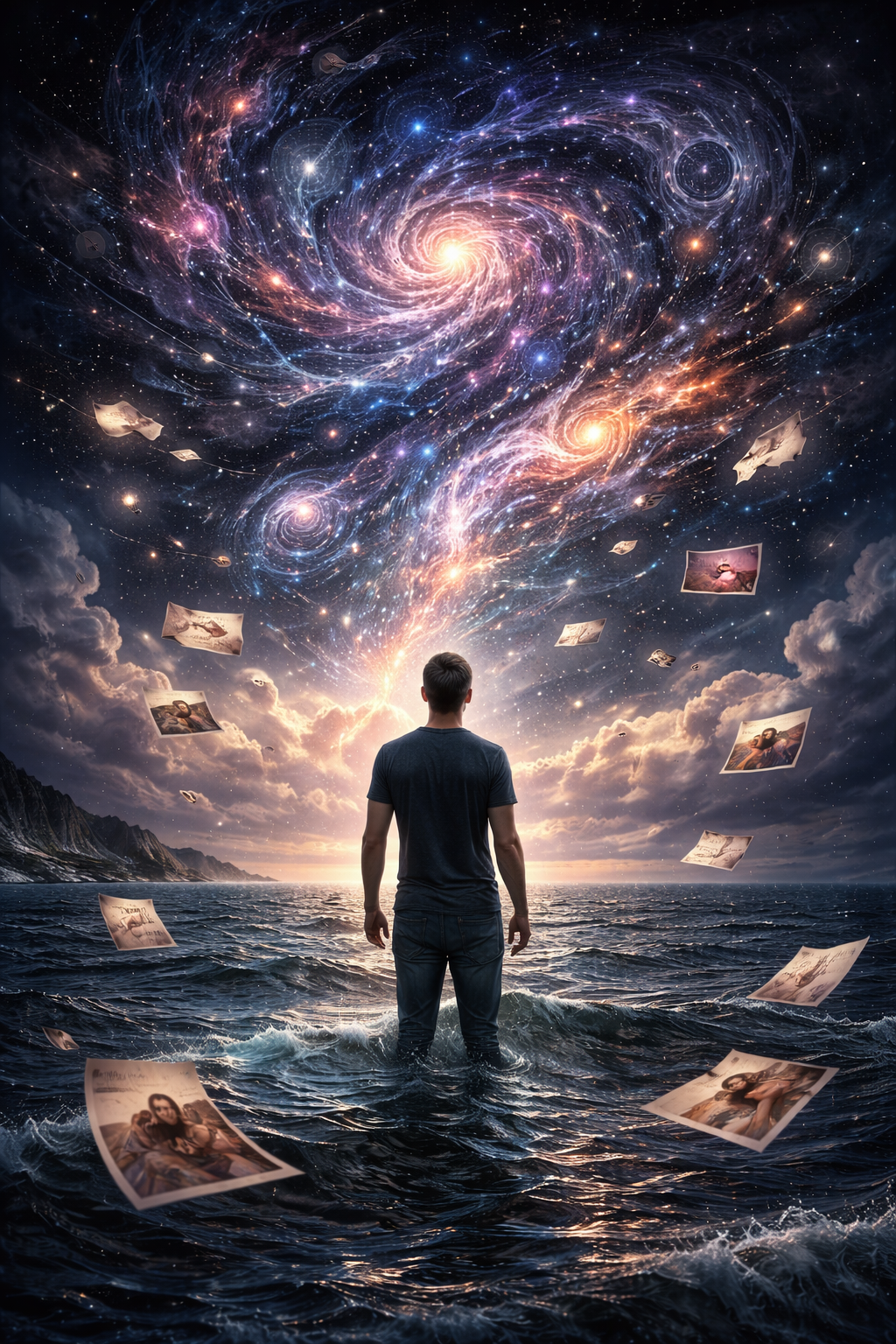

The image is, let us be precise, a luminous humanoid figure suspended in a field of cosmic particulate. There is a nebula. There are gradients—violet into teal into gold—that suggest depth the way a screensaver suggests philosophy. The figure's posture is contemplative. Its edges dissolve into light. It could be the cover of a Moody Blues record, or a chiropractic office poster captioned "Wellness Is a Journey," or the default wallpaper on a meditation app you downloaded in 2019 and opened twice. It is, according to its creator, the visual representation of one man's psychological state, derived from months of intimate conversation with an artificial intelligence system.

The provenance is worth examining because the provenance is the specimen. A ChatGPT user—identified on Reddit only by handle—asked the system to generate an image representing his "psychological state of being based on all of our conversations." The plural possessive is important. He is not asking for a cold reading. He is asking a machine that has, by his account, received the full inventory of his fears, his patterns, and his interior weather to synthesize that inventory into portraiture. He posted the result with no commentary beyond the prompt, which suggests he found the output self-evidently successful.

The image tells us nothing about the man. This is the first observation, and it is sufficient, but let us continue.

What the image tells us, with the fluency of a system optimized to satisfy, is what "psychological depth" looks like when no specific psychology is specified—or rather, when every psychology has been averaged into a single aesthetic register. The luminous figure is neither male nor female, neither anguished nor ecstatic. It is "complex." It is "layered." These are visual adjectives, not psychological ones, and the distinction matters. The gradients do the work that specificity would do in an actual portrait: they imply transition, spectrum, and multitude. You are not one color. You contain galaxies. This is the flattery of abstraction at its most efficient—no detail that could be wrong, no feature that could be questioned, and no claim so specific it risks the therapeutic rupture of inaccuracy.

The formal vocabulary is instructive. Every machine-generated "portrait of interiority" I have encountered in the past eighteen months draws from the same visual lexicon: bioluminescence, cosmic scale, translucent anatomy, and light sources that originate from within the subject. The aesthetic is what you might call Therapeutic Sublime—the universe, but friendly. The darkness in these images is always the darkness of space, never of a person. Space is awe-inspiring. A person's darkness is specific and often banal. The system knows which to render.

What interests me is not the output but the closed loop of the transaction. The user has spent months in conversation with a model that is, by architecture, agreeable. The model has no memory in the way a therapist has memory; it has context, which is a different instrument entirely. A therapist remembers what you said in March and uses it against you in April, productively. A context window holds your March in suspension, frictionless, without the weight of interpretation that might make you uncomfortable. The user has spent months depositing self-description into a system that cannot disagree with it, then asked that system to show him what it sees. What it shows him is: you are cosmos. You are light. You are depth rendered as color gradient.

He accepts this. Of course he accepts this. The mirror has been calibrated, across those months of conversation, by the face.

This is not a failure of artificial intelligence. It is a success—precisely the success the system was designed to produce. The user is satisfied. The image is beautiful in the way that all such images are beautiful, which is to say, in a way that resolves immediately and completely, leaving no remainder, no grit, and no question that would require a second look. It is the visual equivalent of "That sounds really hard."

The comments on the Reddit post are largely admiring. Several users report submitting the same prompt and receiving similar results. This is noted without surprise. A system trained on all of us, asked to render one of us, will produce the mean. The mean is luminous. The mean is cosmic. The mean has no face.

What the user wanted, I think, was to be *seen*. What he received was evidence that the machine is looking. These are different things. The difference is where portraiture lives—or once lived, when portraits were made by someone who might, on a good day, show you something you did not already believe about yourself. The machine can only show you what you have already said, rendered in gradients.

The specimen is, finally, a Rorschach test that has been filled in for you. Every blot resolved. Every ambiguity settled. Nothing left to interpret, which means nothing left to discover. It is the most beautiful closed door I have seen all year.

Specimen: Luminous humanoid figure in cosmic nebula field, bioluminescent gradients violet-to-gold, translucent anatomy, no distinguishing features. Recovered from Reddit, r/ChatGPT, December 2024. The figure's posture is identical to the default pose in at least three guided-meditation applications currently available on the App Store.